One of the things I noticed about analog vs digital is that digital TV has a noticeable delay between the time you tune to a channel and the time it's displayed. Analog, on the other hand, can be shown immediately, which allows you to flip through channels much more quickly.

I wanted UTSC channels to be shown as quickly as possible and I figured it should be possible to bring the latency reasonably close to that of analog TV. If you already read my standard, you know that the channels are sent in packets taking 1 second each to transmit. My initial idea was to have decoders that immediately start decoding and playing a channel once they see the preamble indicating a new packet. This would involve playing the sound and video immediately, once enough data has arrived. The maximum latency would be around 1 second. This worst-case latency would occur if the decoder tuned in right after the preamble and had to wait for another packet.

Sound carries much less data than video, and the standard puts the sound earlier in the packet than the video, so under this proposal the decoder could wait for the sound plus a couple of video frames and then start playing. Assuming 48 kbit/s Opus audio, this would lead to a theoretical minimum latency of just over 45 milliseconds.

However, last night I realized that the minimum latency can't be less than 1 second. I don't believe it's possible to build a good decoder that doesn't wait for a whole packet before it starts decoding. Here are the 4 reasons I believe it's not possible.

Problem 1: No way to find packet preamble

One problem is that there is no way to verify packet validity unless you wait for a whole packet. The "UTSC" preamble that marks the beginning of a packet only works because I added a CRC32 field to check against the rest of the packet. This is because "UTSC" could occur anywhere in the stream, and you don't want the decoder to find a false beginning. Obviously the preamble doesn't matter once you lock onto a station, but you don't want to get garbage by starting the decode process in the wrong place.

Problem 2: Can't use FEC to correct errors

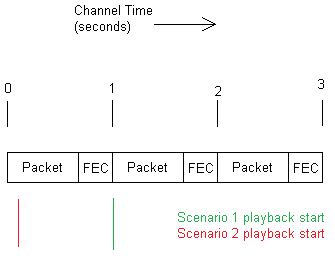

Another problem is that there is 250 Kbit/sec of FEC protecting the data. This amounts to 4/5 FEC. Without an entire packet, you don't get the FEC and so you can't correct any errors. You might argue that only the first packet would be played without FEC and that all future packets would be protected by it. But in reality, because you started decoding the first packet without FEC, you must continue to do so or you risk a brief interruption in the playback. Here is an illustration of this issue, which assumes that no interleaver is used.In Scenario 1, the decoder waits for a whole packet plus the FEC before decoding and playing. In Scenario 2, the decoder waits until just enough data is available before decoding and playing. Notice that if Scenario 2 continues, it will never get to receive the FEC before playing a packet.

Problem 3: Time discrepancy in video compression

The biggest problem in my opinion is the uneven distribution of data inherent to digital video compression, especially the interframe variety used by almost every codec.

In analog TV, every element of each frame took the same amount of time, each time a frame was transmitted. There were some tolerances, such as the power grid deviating from 60 Hz or when they lowered the frame rate to add color, but overall it was reasonably precise and unchanging.

In digital video, more data is spent on keyframes than on inter frames. In case you didn't know, a keyframe is a complete image that the inter frames after it build on. The compressor encodes a regular image to start the video, and the frames after that only encode what changed relative to earlier frames. Every so often another keyframe is sent.

If the decoder tries to start decoding before a whole packet is received, then it will most likely fail to play the video properly. This is because much more data is sent in the initial keyframe of each packet than in the rest of the frames. Since the channel bandwidth is constant, this means that keyframes will take longer to send than inter frames.

Since digital frames would be received at indeterminate intervals, you can't just start playing the video as soon as you get the first few frames. If you don't wait for the entire packet, you're very likely to run out of data when a longer frame is being transmitted.
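Here's a rough sketch of that underrun in Python. Only the 1-second packet and the 1,250,000 bits per packet come from the standard; the 30 fps frame rate, the frame sizes, and the names `arrival_times` and `late_frames` are made up for illustration, and audio, headers and FEC are left out. It compares a decoder that starts playing as soon as the first keyframe arrives with one that waits for the whole first packet.

```python
# Hypothetical numbers: only the 1-second packet and the 1,250,000 bits
# per packet come from the post. Everything else is an assumption.
FPS = 30                  # assumed frame rate
LINE_RATE = 1_250_000     # bits per second on the air (data + FEC)
PACKET_SECONDS = 1.0      # one packet per second


def arrival_times(packets):
    """Time at which the last bit of each frame has been received.

    `packets` is a list of packets, each a list of frame sizes in bits.
    Each packet starts on a whole second; audio, headers and FEC are
    ignored to keep the sketch small.
    """
    times = []
    for p, frame_bits in enumerate(packets):
        received = 0
        for bits in frame_bits:
            received += bits
            times.append(p * PACKET_SECONDS + received / LINE_RATE)
    return times


def late_frames(packets, start):
    """Indexes of frames that arrive after their display deadline."""
    late = []
    for k, arrived in enumerate(arrival_times(packets)):
        deadline = start + k / FPS
        if arrived > deadline + 1e-9:   # small tolerance for float rounding
            late.append(k)
    return late


# A quiet scene (small keyframe) followed by a busy one (large keyframe).
quiet = [100_000] + [10_000] * (FPS - 1)
busy = [500_000] + [15_000] * (FPS - 1)
stream = [quiet, busy]

eager_start = arrival_times(stream)[0]   # play once the first frame is in
patient_start = PACKET_SECONDS           # play once packet 0 is complete

eager_late = late_frames(stream, eager_start)
patient_late = late_frames(stream, patient_start)
print("eager decoder, late frames:", eager_late)
print("whole-packet decoder, late frames:", patient_late)
```

With these made-up sizes, the eager decoder plays the quiet packet fine and then stalls on the large keyframe that opens the second packet (frame 30), while the decoder that waited a full packet never runs dry.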

Problem 4: The interleaver

Even though I think #3 is the biggest issue, I saved this one for last because the interleaver is one of the more recent developments. To make this section short, UTSC packets are scrambled by an interleaver, and because the entire packet is scrambled, a receiver must wait until the entire packet is received before decoding it. This means the absolute minimum latency is about 1 second.

Below is a longer explanation of the interleaver.

Although UTSC could be transmitted on any band wide enough, such as 500 MHz or 2.4 GHz, I think it's best suited to the 900 MHz band. The problem is that many smart energy meters transmit FHSS (hopping bursts) all over 900 MHz. Since reliability is the focus of UTSC, I needed a way to somehow filter those. The FEC is good, I think, but it won't fix huge burst errors when every energy meter in a neighborhood transmits over a station.

I decided to use a fully random interleaver, a sort of scrambler. Since this is part of the air interface (the way it's transmitted), it doesn't affect the packet format that I released in 2017.

I generated a large amount of encryption-grade randomness, verified it with a program called ENT, and then used it to generate random integers for interleaver bit positions. This means that once you have a UTSC packet that's ready to transmit, you simply copy bit-by-bit into a new interleaved packet, using the bit positions I generated.

Since there are 1,000,000 (data) + 250,000 (FEC) bits in a UTSC packet, we have 1,250,000 bits, starting at bit 0 and ending at bit 1,249,999. We do NOT want to interleave the "UTSC" preamble, because we need receivers to be able to find it, but we DO want to interleave the CRC32 that comes right after it because we want it to be more resistant to burst errors.

This means we only have to interleave 1,250,000 - 32 = 1,249,968 bits, numbered from 0 to 1,249,967. So when we start populating the bits in our interleaved packet for transmitting, bit #1601 from the plain unscrambled packet goes first at position #0, then bit #952398, and so on. Since the pattern is made from high-quality randomness, the bit positions are extremely well distributed.

On the other end, the receiver would have a copy of the interleaver's bit ordering scheme and would work the process backward. To reproduce the original packet, the receiver would take bit #0 from the received packet and put it at bit #1601, and put bit #1 at bit #952398, and so on. At the end, the original packet will have been reconstructed and any burst errors will be evenly distributed over the entire packet, making it easier for the FEC to fix.
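As a minimal sketch of that round trip in Python: the real bit positions come from the table I generated, which isn't reproduced here, so `make_table` below is a stand-in that shuffles with Python's `random` module (not encryption-grade) and an arbitrary seed. The function names are also just for illustration.

```python
import random

PACKET_BITS = 1_250_000                    # 1,000,000 data + 250,000 FEC
PREAMBLE_BITS = 32                         # "UTSC", left in the clear
BODY_BITS = PACKET_BITS - PREAMBLE_BITS    # 1,249,968 interleaved bits


def make_table(n, seed):
    """Stand-in for the real table: a fixed permutation of 0..n-1."""
    table = list(range(n))
    random.Random(seed).shuffle(table)
    return table


def interleave(body, table):
    # Transmitted position i carries plain bit number table[i].
    return [body[src] for src in table]


def deinterleave(received, table):
    # Received position i goes back to plain position table[i].
    plain = [0] * len(table)
    for i, dst in enumerate(table):
        plain[dst] = received[i]
    return plain


table = make_table(BODY_BITS, seed=2017)   # arbitrary seed, not the real table
rng = random.Random(1)
body = [rng.getrandbits(1) for _ in range(BODY_BITS)]   # dummy packet body

restored = deinterleave(interleave(body, table), table)
print("round trip OK:", restored == body)
```

Both sides only need the same table. Note that `deinterleave` can't finish placing bits until the last one has arrived, which is the whole-packet wait described above.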

Here's a picture showing a 20-millisecond burst error. The drawing is to scale, showing how much that error would damage a UTSC packet. You may want to open the image in another tab and zoom in to see it in detail.

Left: a 20-ms error in a plain UTSC packet

Right: the same error in an interleaved packet.
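The same comparison can be roughed out in numbers. This sketch assumes the packet is sent at a constant 1,250,000 bits per second, so 20 ms of interference covers 25,000 consecutive bits on the air. The permutation is again a stand-in built with Python's `random` module rather than the real table, and the burst position is arbitrary.

```python
import random

BODY_BITS = 1_250_000 - 32               # interleaved part of the packet
LINE_RATE = 1_250_000                    # bits per second, one packet per second
BURST_BITS = LINE_RATE * 20 // 1000      # 20 ms of air time = 25,000 bits


def longest_run(positions):
    """Length of the longest stretch of consecutive bit positions."""
    best = run = 0
    previous = None
    for pos in sorted(positions):
        if previous is not None and pos == previous + 1:
            run += 1
        else:
            run = 1
        best = max(best, run)
        previous = pos
    return best


# Stand-in permutation: on-air position i carries plain bit table[i].
table = list(range(BODY_BITS))
random.Random(2017).shuffle(table)

burst_start = 400_000                    # arbitrary position of the burst
hit_on_air = range(burst_start, burst_start + BURST_BITS)

# Without interleaving, on-air positions are the plain positions.
plain_damage = list(hit_on_air)
# With interleaving, each damaged on-air bit maps back through the table.
interleaved_damage = [table[i] for i in hit_on_air]

print("longest damaged run, plain:", longest_run(plain_damage))
print("longest damaged run, interleaved:", longest_run(interleaved_damage))
```

The same 25,000 bits are damaged either way. What changes is that the plain packet loses them in one solid block, while the interleaved packet ends up with them scattered in very short runs that the FEC has a much better chance of repairing.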

I didn't know if I wanted to interleave, because I immediately saw that it would prevent instant playback. I wondered if I should leave some of the bit flags un-interleaved so the channel could indicate whether it was interleaved or not, but I realized that an error could flip the flag and confuse the decoder, not to mention the issue with burst errors breaking any non-interleaved channels. In the end, I decided that all UTSC channels will be interleaved.
